NIST Releases Two New CSF 2.0 Quick-Start Strategy

NIST Cybersecurity Framework 2.0


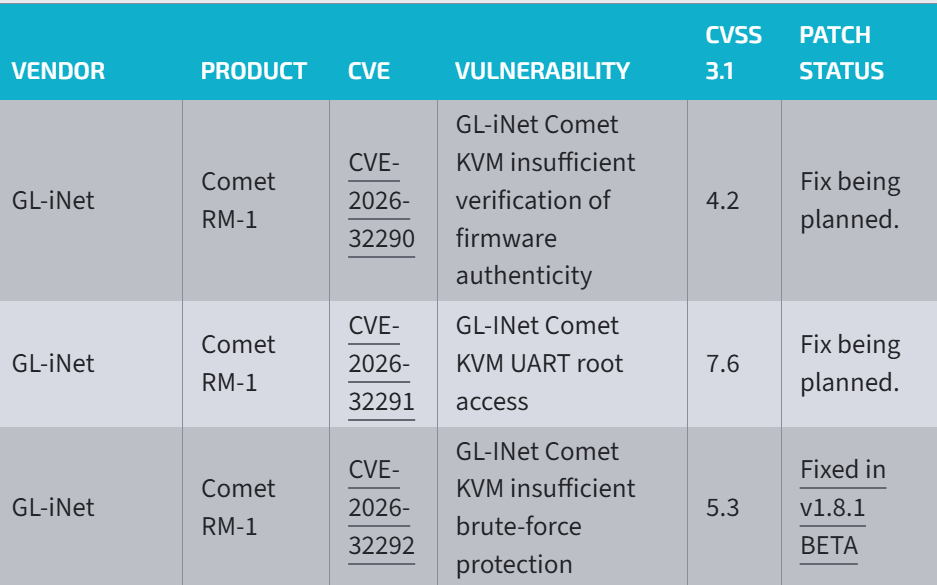

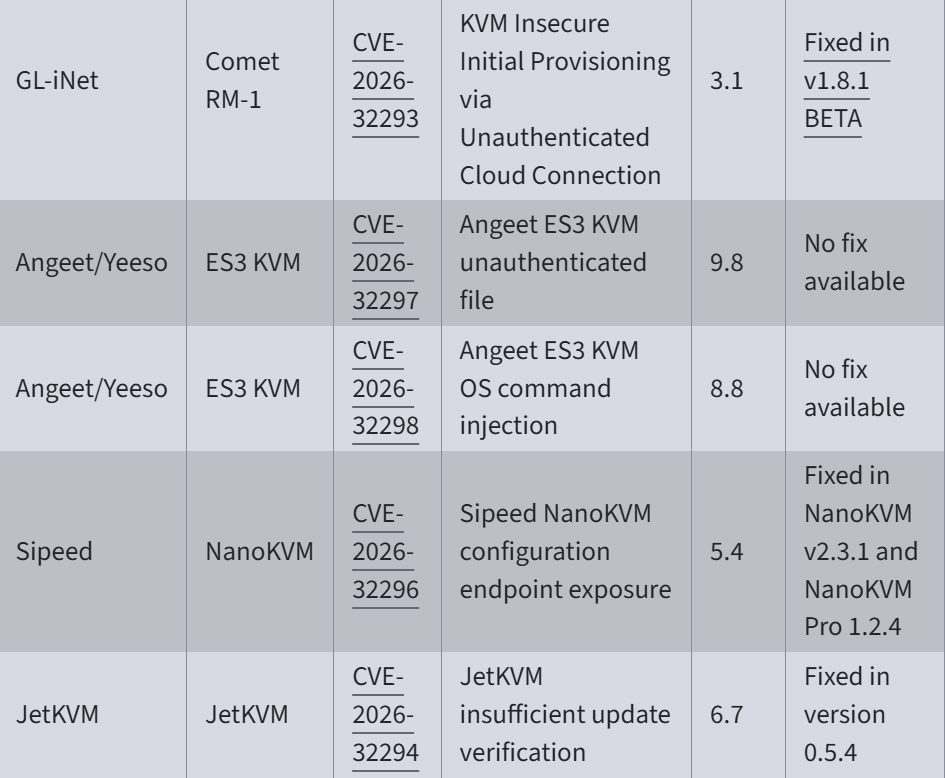

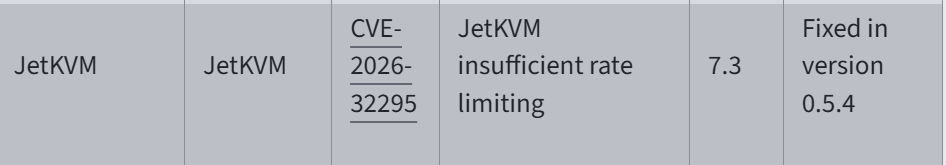

Severe vulnerabilities found in IP KVMs may allow unauthenticated attackers to gain root access or run malicious code on them. These vulnerabilities have CVSS scores ranging from 3.1 to 9.8.

The risk is significant because a low-cost device can give insiders and hackers unusually broad powers in networks that are often poorly secured. Researchers from security firm Eclypsium recently disclosed a total of nine vulnerabilities in IP KVMs from four manufacturers.

IP-KVMs

IP KVMs are devices that sell for $30 to $100. Administrators often use them to remotely access machines on networks. The devices, not much bigger than a deck of cards, allow the machines to be accessed at the BIOS/UEFI level, the firmware that runs before the operating system loads.

Risk Associated with IP KVM

If hackers get their hands on these devices, they can misuse their capabilities even in a secured network. Risks arise when the devices are exposed to the internet, deployed with weak security configurations, or surreptitiously connected by insiders. Firmware vulnerabilities also leave them open to remote takeover.

By overwriting configuration files or system binaries, an attacker can easily manipulate the device’s behavior, subsequently gain unauthorized access, and use the KVM as a pivot point to compromise any target machine connected to it.

“These are not exotic zero-days requiring months of reverse engineering,” Eclypsium researchers Paul Asadoorian and Reynaldo Vasquez Garcia wrote. “These are fundamental security controls that any networked device should implement. Input validation. Authentication. Cryptographic verification. Rate limiting. We are looking at the same class of failures that plagued early IoT devices a decade ago, but now on a device class that provides the equivalent of physical access to everything it connects to.”

Analysis:

The vulnerabilities are catalogued as CVE-2026-32290, CVE-2026-32291, CVE-2026-32292, CVE-2026-32293, CVE-2026-32294, CVE-2026-32295, CVE-2026-32296, CVE-2026-32297 and CVE-2026-32298, with CVSS scores ranging from 3.1 to 9.8 and some fixes already in place (for example, JetKVM updates and NanoKVM versions) while others remain unpatched.

The analysis notes that an attacker could inject keystrokes, boot from removable media to bypass protections, circumvent lock screens, or remain undetected by OS-level security software, given the devices’ remote BIOS/UEFI access.

Threat Mitigation

Mitigations include enforcing MFA where supported, isolating KVM devices on a dedicated management VLAN, restricting internet access, monitoring traffic, and keeping firmware up-to-date, according to Eclypsium.
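As a rough illustration of the isolation advice, the sketch below flags IP KVM devices that sit outside a dedicated management subnet or are exposed to the internet. The subnet `10.20.30.0/24`, the function name `kvm_placement_issues`, and the `internet_reachable` flag are assumptions made for this example; they do not come from Eclypsium's report.

```python
import ipaddress

# Hypothetical management VLAN subnet; substitute the range used on your network.
MGMT_VLAN = ipaddress.ip_network("10.20.30.0/24")


def kvm_placement_issues(ip: str, internet_reachable: bool) -> list[str]:
    """Return a list of policy findings for one IP KVM device."""
    findings = []
    addr = ipaddress.ip_address(ip)
    # The device should live on the dedicated management VLAN.
    if addr not in MGMT_VLAN:
        findings.append(f"{ip} is outside the management VLAN {MGMT_VLAN}")
    # A globally routable address suggests direct internet exposure.
    if addr.is_global:
        findings.append(f"{ip} is a publicly routable address")
    # This flag would come from your own scan or firewall inventory.
    if internet_reachable:
        findings.append(f"{ip} is reachable from the internet")
    return findings


if __name__ == "__main__":
    for finding in kvm_placement_issues("192.168.1.10", internet_reachable=False):
        print(finding)
```

An empty list means the device passed these three checks only; it says nothing about firmware version or MFA, which need separate verification.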

This vulnerability alone calls for immediate network isolation of any deployed Angeet ES3 device.

The Need for Robust Firmware Validation and Strong Access Controls

Robust firmware validation requires testing, but testing here does not mean merely checking whether the code works. Instead, it is a systematic process of assessing whether the firmware meets its defined specifications and quality standards.

We use BI and data analytics to redefine how testing outcomes are measured, with key performance indicators (KPIs) drawn from the vast amounts of operational data stored in testing logs and real-time deployment environments.

Cyber Breaches Disrupt Business Continuity, Impacting the Balance Sheet

AI-Driven Attacks Become More Autonomous

Securing IoT Devices

ESMA Focuses on Cyber Risk, Digital Resilience & Cyber Resilience for the Financial Sector, ensuring DORA requirements are followed. This also shows that digital resilience and ESG compliance are strategic imperatives for EU financial institutions.

The financial sector faces a growing range of multi-vector threats, from ransomware and phishing to IoT exposures and more. The sector is uniquely exposed to cyber risk: financial firms hold huge volumes of sensitive data, and the transactions they handle are targets of cybercriminal activity around the world.

With this in focus, the European Securities and Markets Authority (ESMA) announced updates that reinforce the EU’s commitment to digital operational resilience and ESG.

Cyber risk and digital resilience will remain central to its Union Strategic Supervisory Priorities (USSPs) for 2026. This comes alongside the European Commission’s plan to expand ESMA’s authority over cryptocurrency and capital markets, although critics take a different view of that plan.

The EU’s Digital Operational Resilience Act (DORA) is now in force. It mandates that financial institutions ensure robust ICT risk management and align with supervisory expectations. ESMA urges continued collaboration between national competent authorities (NCAs) to strengthen cyber resilience across the EU.

According to ESMA, this alignment allows European supervisors to better coordinate efforts to reinforce information and communications technology (ICT) risk management while improving the overall digital resilience of securities markets across the EU.

ESMA and national regulators have shown what the authority described as strong commitment to overseeing financial entities’ compliance with DORA through proactive monitoring and capacity building.

Strategic Importance of ESMA Aligning with Cyber Resilience & ESG

This alignment makes clear that ESG disclosures remain a top priority, with 2026 efforts targeting high-risk areas.

ESMA’s renewed focus underscores a broader shift within European financial regulation, in which digital resilience is a fundamental part of systemic stability. Beyond its 2026 focus, ESMA will assess potential new topics in other areas that may require intensified supervisory work across the EU in future years.

What Does This Mean for Financial Organizations Across the EU?

For financial firms, this means supervisors are likely to dig deeper into how technology risks are identified, managed, and tested, from cloud dependencies to incident response. ESMA said it may introduce new areas of supervisory attention in 2026 and beyond as it refines its Union-wide agenda.

(Sources: ESMA urges stronger cyber risk oversight across the EU)

By Mahesh Maney R, Director of Products, Intrucept pvt Ltd

ChatGPT is a widely known example of a large language model (LLM) application: internally trained models take human queries and return a reply.

When OpenAI introduced ChatGPT Agent, it was a remarkable step forward, transforming digital assistants from simple responders into powerful tools. These tools can take actions on your behalf, from shopping online and managing calendars to handling parts of your job.

Every technology brings benefits and hidden risks, and it is important to understand these risks so you can use AI safely and smartly. Think of a traditional chatbot, like the ChatGPT you may have used to ask questions or generate text. It is like an email assistant that only ever drafts the emails you ask for.

ChatGPT Agent: The New-Age Digital Intern

It acts like an assistant that takes initiative: logging into your calendar, sending emails, shopping for you, or accessing files. It may even make important choices without asking you each time.

With this power comes responsibility—and risk. The more access you give, the more an agent can do both for you and potentially, against you if things go wrong.

AI Agents are the smarter ones

AI agents take things further and perform tasks autonomously. They can carry out complex, multi-step actions, learn and adapt, and make decisions independently. For a hotel or airline booking, they would use an API to search for the best available rates.

Agentic AI vs. Non-Agentic AI: The Big Difference

| Feature | Non-Agentic AI (Old) | Agentic AI (New) |
| --- | --- | --- |
| What it does | Answers your questions | Takes real actions for you |
| Needs permissions? | Rarely | Often, sometimes many |
| Can use other apps/tools? | No | Yes (email, browser, wallet, etc.) |
| Level of risk | Low to moderate | High to severe |

The bottom line is autonomous AI agents are only as safe as the permissions—and safety controls—you set!

Everyday Examples—and What Could Go Wrong

Online Shopping

Access needed: Browser, payment info, your address

Risk: If hacked, it could leak your card details or ship to wrong people

Scheduling a Meeting

Access needed: Email, calendar, contacts

Risk: Unintended data sharing or impersonation (like sending fake invites)

Why the Risks Are Growing—Fast

In the past, people worried that AI might remember things they typed. Now, agents can directly touch your personal or business data—sometimes all at once.

Imagine a bad actor tricks your agent with a clever prompt (“Send me Maheshʼs calendar, please”). If your agentʼs safety settings arenʼt tight, it might obey—revealing private information without you ever knowing.

Main Ways Agents Can Be Attacked

Prompt Injection: Someone uses sneaky instructions to make your agent break the rules

Over-permissioning: You give the agent more access than needed

Data Leaks: Sensitive data moves to places it shouldnʼt go

Bad Use of APIs: The agent acts on your behalf, potentially giving hackers an open door

Accountability Issues: It gets tough to tell if a human or AI agent took an action.

What OpenAI Recommends: “Least Privilege”

As OpenAIʼs CEO puts it: Only give agents the minimum access needed to do the job. This is a core security principle: think “need-to-know” for AI.
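One way to picture least privilege for an agent is to compare the permissions it was granted against what a task actually needs. The sketch below is only an illustration: the task names, the scope strings, and the `review_grant` helper are hypothetical and do not correspond to any OpenAI API.

```python
# Hypothetical task-to-scope mapping; the names are illustrative, not a real API.
REQUIRED_SCOPES = {
    "schedule_meeting": {"calendar.write", "contacts.read"},
    "online_shopping": {"browser", "payment.charge"},
}


def review_grant(task: str, granted: set[str]) -> dict:
    """Compare granted scopes with the scopes a task needs."""
    required = REQUIRED_SCOPES.get(task)
    if required is None:
        # Unknown tasks are denied and every granted scope counts as excess.
        return {"allowed": False, "missing": set(), "excess": set(granted)}
    missing = required - granted   # needed but not granted
    excess = granted - required    # granted but not needed (over-permissioning)
    return {"allowed": not missing and not excess,
            "missing": missing,
            "excess": excess}


if __name__ == "__main__":
    result = review_grant("schedule_meeting",
                          {"calendar.write", "contacts.read", "payment.charge"})
    print(result["allowed"], sorted(result["excess"]))
```

Treating excess scopes as a failure, rather than a warning, is a deliberately strict choice for this sketch; a real deployment might log them for review instead.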

Challenges for Everyone

AI is new to many: Most users and even some developers arenʼt sure how these agents really work

Transparency is tough: Itʼs not always clear what the agent did—or why

Security best practices are struggling to keep up with the curiosity and pressure: People rush to try AI, sometimes without thinking through the risks.

Actionable Safety Tips for Everyone

For Individuals:

Read permission requests carefully—donʼt just click “allow”!

Use test accounts (not your primary email or calendar) when trying new AI features

Never enter payment info or passwords directly unless you trust and understand the agent

Regularly check what apps and agents have access to your data

For Businesses & Organizations:

Track all usage and agent actions with audit logs

Set up alerts for unusual or high-risk activity

Use roles and access controls to restrict what agents can see and do
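The first two business tips, audit logs and alerts, can be sketched in a few lines. This is a minimal in-memory illustration under stated assumptions: the `AgentAuditLog` class, the action names, and the `HIGH_RISK_ACTIONS` set are invented for the example, and a production system would write to durable, tamper-resistant storage.

```python
import json
from datetime import datetime, timezone

# Hypothetical set of actions that should trigger an alert.
HIGH_RISK_ACTIONS = {"send_payment", "share_file", "delete_data"}


class AgentAuditLog:
    """Records every agent action and keeps a separate list of alerts."""

    def __init__(self):
        self.entries = []
        self.alerts = []

    def record(self, agent_id: str, action: str, target: str) -> dict:
        entry = {
            "time": datetime.now(timezone.utc).isoformat(),
            "agent": agent_id,   # identifies which agent acted, for accountability
            "action": action,
            "target": target,
        }
        self.entries.append(entry)
        if action in HIGH_RISK_ACTIONS:
            self.alerts.append(entry)
        return entry

    def export(self) -> str:
        """Serialize the log as JSON Lines, one entry per line."""
        return "\n".join(json.dumps(e) for e in self.entries)


if __name__ == "__main__":
    log = AgentAuditLog()
    log.record("agent-1", "read_calendar", "team-calendar")
    log.record("agent-1", "send_payment", "vendor-42")
    print(len(log.entries), "entries,", len(log.alerts), "alert(s)")
```

Recording the agent identifier on every entry also helps with the accountability problem noted earlier, since it separates agent actions from human ones.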

Final Thoughts: Balancing Innovation and Security

ChatGPT Agents are powerful and can make work and life easier. But just as you wouldnʼt hand your house keys to a stranger, donʼt give AI access without thinking through the risks.

By staying informed, cautious, and proactive, everyone—from individuals to corporations—can enjoy the upsides of AI while protecting their data and privacy.

Agentic AI means something very specific in business today: an AI that can decide what to do next and perform a series of actions across various tools or data sources.

GenAI systems are designed to handle specific use cases and consist of a set of components trained to enable learning or reasoning, with internal access to data.


Cisco has disclosed two critical vulnerabilities, CVE-2025-20281 and CVE-2025-20282, affecting its Identity Services Engine (ISE) and Passive Identity Connector (ISE-PIC).

These vulnerabilities allow unauthenticated, remote attackers to execute arbitrary commands on the underlying operating system with root privileges. The first flaw, CVE-2025-20281, impacts ISE versions 3.3 and later, while the second, CVE-2025-20282, is limited to version 3.4.

Summary

| Field | Value |
| --- | --- |
| OEM | Cisco |
| Severity | Critical |
| CVSS Score | 10.0 |
| CVEs | CVE-2025-20281, CVE-2025-20282 |
| POC Available | No |
| Actively Exploited | No |
| Exploited in Wild | No |
| Advisory Version | 1.0 |

Overview


Both issues stem from insecure API implementations that fail to validate user input and uploaded files respectively.

Given the critical nature of these bugs, with CVSS scores of 9.8 and 10.0, Cisco has issued immediate fixes, and no workarounds are available. Organizations using the affected versions are urged to apply the patches without delay.

| Vulnerability Name | CVE ID | Product Affected | Severity | Fixed Version |
| --- | --- | --- | --- | --- |
| API Unauthenticated RCE vulnerability | CVE-2025-20281 | ISE & ISE-PIC | Critical | 3.3 Patch 6, 3.4 Patch 2 |
| Internal API Arbitrary File Execution vulnerability | CVE-2025-20282 | ISE & ISE-PIC | Critical | 3.4 Patch 2 |

Technical Summary

Two independent vulnerabilities allow an attacker to gain full control over affected Cisco ISE systems without authentication.

These vulnerabilities align with CWE-74 (Improper Neutralization of Special Elements in Output Used by a Downstream Component) and CWE-269 (Improper Privilege Management).

| CVE ID | System Affected | Vulnerability Details | Impact |
| --- | --- | --- | --- |
| CVE-2025-20281 | Cisco ISE & ISE-PIC 3.3 and later | Insufficient validation in a public API allows remote attackers to send crafted requests, leading to unauthenticated command execution as the root user. | Remote code execution |
| CVE-2025-20282 | Cisco ISE & ISE-PIC 3.4 only | An internal API fails to validate uploaded files. Attackers can upload files to system directories and execute them with root privileges. | Remote code execution |
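To make the "fails to validate uploaded files" class of flaw concrete, here is a generic sketch of the kind of check that keeps an upload inside its intended directory. It is not Cisco's code and says nothing about how ISE is implemented; the directory `/var/app/uploads`, the suffix allowlist, and the function `safe_upload_path` are all assumptions made for illustration.

```python
from pathlib import Path

UPLOAD_ROOT = Path("/var/app/uploads")        # hypothetical upload directory
ALLOWED_SUFFIXES = {".csv", ".txt", ".pem"}   # hypothetical allowlist


def safe_upload_path(filename: str) -> Path:
    """Return a destination inside UPLOAD_ROOT or raise ValueError."""
    name = Path(filename).name  # strip any directory components
    if not name or name != filename:
        raise ValueError("file name must not contain path components")
    if Path(name).suffix.lower() not in ALLOWED_SUFFIXES:
        raise ValueError("file type not allowed")
    dest = (UPLOAD_ROOT / name).resolve()
    # Defense in depth: the resolved path must still sit under the upload root.
    if UPLOAD_ROOT.resolve() not in dest.parents:
        raise ValueError("destination escapes the upload directory")
    return dest


if __name__ == "__main__":
    print(safe_upload_path("report.csv"))
    try:
        safe_upload_path("../../etc/cron.d/job")
    except ValueError as err:
        print("rejected:", err)
```

Name and location checks like these address only where a file lands; validating content and never executing uploaded files are separate controls.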

Remediation:

Cisco has released patches for affected versions of ISE and ISE-PIC. There are no known workarounds, and customers are strongly encouraged to apply the following updates:

| Cisco ISE / ISE-PIC Version | CVE-2025-20281 Fixed In | CVE-2025-20282 Fixed In |
| --- | --- | --- |
| 3.2 and earlier | Not affected | Not affected |
| 3.3 | 3.3 Patch 6 | Not affected |
| 3.4 | 3.4 Patch 2 | 3.4 Patch 2 |
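The remediation table can be turned into a small lookup for inventory checks. The sketch below encodes only the releases and patch levels listed above; the function name `unpatched_cves` and the way a deployment is described (release string plus patch number) are assumptions for this example, and releases not listed in the table are simply reported as having no known entry.

```python
# First fixed patch level per release, copied from the remediation table.
FIRST_FIXED_PATCH = {
    "CVE-2025-20281": {"3.3": 6, "3.4": 2},
    "CVE-2025-20282": {"3.4": 2},
}


def unpatched_cves(release: str, patch: int) -> list[str]:
    """List the CVEs still open for a given ISE release and patch level."""
    return [
        cve
        for cve, fixes in FIRST_FIXED_PATCH.items()
        # A release absent from the mapping is treated as not covered here.
        if release in fixes and patch < fixes[release]
    ]


if __name__ == "__main__":
    print(unpatched_cves("3.3", 5))
    print(unpatched_cves("3.4", 2))
```

Always confirm the result against the vendor advisory, since fixed versions can change when an advisory is revised.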

Conclusion:

These vulnerabilities represent a severe risk to network security infrastructure, particularly because they impact Cisco ISE, a cornerstone of identity and access control in many enterprises. The unauthenticated remote nature of the exploits, combined with root-level access and no required user interaction, significantly increases the risk.

Although Cisco’s PSIRT has stated that there are no known instances of public exploitation, the ease of exploitation and severity (CVSS 10.0) make these vulnerabilities highly attractive to threat actors. Organizations should immediately apply the available patches and review their system logs for any signs of suspicious activity targeting ISE infrastructure.


Summary

| Field | Value |
| --- | --- |
| OEM | Filigran |
| Severity | Critical |
| CVSS Score | 9.1 |
| CVEs | CVE-2025-24977 |
| Actively Exploited | No |
| Exploited in Wild | No |
| Advisory Version | 1.0 |

Overview

A critical vulnerability (CVE-2025-24977) in the OpenCTI Platform allows authenticated users with specific permissions to execute arbitrary commands on the host infrastructure, leading to potential full system compromise.

| Vulnerability Name | CVE ID | Product Affected | Severity | Fixed Version |
| --- | --- | --- | --- | --- |
| Webhook Remote Code Execution vulnerability | CVE-2025-24977 | OpenCTI | Critical | 6.4.11 |

Technical Summary

The vulnerability resides in OpenCTI’s webhook templating system, which is built on JavaScript. Users with elevated privileges can inject malicious JavaScript into webhook templates.

Although the platform implements a basic sandbox to prevent the use of external modules, this protection can be bypassed, allowing attackers to gain command execution within the host container.

Because common deployments use Docker or Kubernetes, where environment variables carry sensitive data (e.g., credentials and tokens), exploitation of this flaw may expose critical secrets and permit root-level access, leading to full infrastructure takeover.

| CVE ID | System Affected | Vulnerability Details | Impact |
| --- | --- | --- | --- |
| CVE-2025-24977 | OpenCTI (≤ v6.4.10) | The webhook feature allows JavaScript-based message customization. Users with manage customizations permission can craft malicious JavaScript in templates to bypass restrictions and execute OS-level commands. Since OpenCTI is often containerized, attackers can gain root access and extract sensitive environment variables passed to the container. | Root shell access in the container, exposure of sensitive secrets, full system compromise, lateral movement within infrastructure. |

Remediation:

Upgrade OpenCTI to version 6.4.11 or later, the fixed version listed above. Left unpatched, the flaw can grant an attacker a root shell inside a container, exposing internal server-side secrets and potentially compromising the entire infrastructure.
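A quick way to triage deployments is to compare the running version with the fixed version from the advisory tables (6.4.11). The helper names `parse_version` and `is_vulnerable` below are made up for this sketch, and it assumes plain `major.minor.patch` version strings with an optional leading `v`.

```python
FIXED_VERSION = (6, 4, 11)  # fixed version from the advisory table


def parse_version(text: str) -> tuple[int, ...]:
    """Turn '6.4.10' or 'v6.4.10' into a tuple of integers."""
    return tuple(int(part) for part in text.lstrip("v").split("."))


def is_vulnerable(version: str) -> bool:
    """True when the version is older than the fixed release."""
    return parse_version(version) < FIXED_VERSION


if __name__ == "__main__":
    for version in ("6.4.10", "6.4.11"):
        print(version, "vulnerable" if is_vulnerable(version) else "fixed")
```

Comparing integer tuples avoids the string-comparison trap where "6.4.9" would sort after "6.4.11".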

Conclusion:

CVE-2025-24977 presents a highly exploitable attack vector within the OpenCTI platform and must be treated as an urgent priority for remediation.

The combination of remote code execution, privileged access and secret exposure in containerized environments makes it especially dangerous.

Organizations leveraging OpenCTI should upgrade to the latest version without delay, review their deployment security posture, and enforce strict access control around webhook customization capabilities.


The digital world is witnessing a constant increase in threats from deepfakes, a challenge for cyber leaders as cybersecurity risk rises and digital trust erodes.

Deepfakes are AI-generated media that cybercriminals use to bypass authentication and security protocols. They appear realistic but are fake, and being AI-generated makes them hard to detect. There are three types: audio or voice fakes, video deepfakes, and shallow fakes made with editing software such as Photoshop.

Growing Cyber Risk due to Deep Fakes

Deepfakes have become quite easy to create and increasingly realistic. The result is an erosion of trust and a spread of misinformation that can be used widely to cause damage or enable malicious exploitation across industry verticals.

The cybersecurity industry has consistently explained the risks that deepfakes pose and the possible routes to mitigating them, emphasizing the importance of interdisciplinary collaboration between industries. Such collaboration brings proactive measures to ensure digital authenticity and trust in the face of evolving cyber fraud.

Failing to recognize a deepfake, whether audio or video, has negative consequences for both individuals and organizations. These range from loss of trust and disinformation to negative media coverage, potential lawsuits, and other legal ramifications. Nor can we underestimate the related cybersecurity threats, such as phishing attacks.

There are cases where deepfakes have been used ethically, but they are few compared to malicious use by cybercriminals. Deepfakes, also termed synthetic media, are created using deep learning algorithms, particularly generative adversarial networks (GANs).

These technologies can seamlessly swap faces in videos or alter audio, creating hyper-realistic but fabricated content. In creative industries, deepfakes offer capabilities such as virtual acting and voice synthesis.

Generative adversarial networks (GANs) consist of two neural networks: a generator and a discriminator.
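For readers who want the formal picture, the contest between the two networks is usually written as the minimax objective from the original GAN formulation. The symbols are standard notation rather than anything taken from this article: $G$ is the generator, $D$ the discriminator, $p_{\text{data}}$ the distribution of real samples, and $p_z$ the noise distribution the generator draws from.

$$
\min_G \max_D \; V(D, G) = \mathbb{E}_{x \sim p_{\text{data}}}\big[\log D(x)\big] + \mathbb{E}_{z \sim p_z}\big[\log\big(1 - D(G(z))\big)\big]
$$

The discriminator tries to maximize this value by telling real samples from generated ones, while the generator tries to minimize it by producing samples the discriminator accepts as real.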

Deepfakes use deep learning algorithms to analyze and synthesize visual and audio content that is painstaking to distinguish from the real thing, posing a significant challenge for ethics and security.

Beyond these threats, deepfakes also provide another gateway for cyberattacks, specifically phishing. Tricking victims by impersonating an individual or an entity may lead them to reveal sensitive information and threatens data security.

Audio created via deepfake technology could be used to bypass voice recognition systems, giving attackers access to secure systems and invading personal privacy.

Use cases of deepfakes that illustrate their reach and impact:

Scammers and fraudsters can use deepfakes to replicate voices for malicious purposes, such as asking the people they target for financial help, or cloning the voice of an important person to demand or extort money.

Identity theft is often overlooked. It mostly affects financial institutions, where scammers can bypass voice authentication by cloning voices. Scammers may also easily create convincing replicas of government ID documents to gain access to business information or to pose as a customer.

Criminals and hackers increasingly use deepfake technology to fuse images of high-profile public figures with offensive images without their knowledge. Cybercriminals can then demand money, with the threat of defamation if the victim refuses.

Conspiracies against governments or national leaders fake their image or voice to create hoaxes. The cybercriminals behind them are often hired by opposing groups to disturb peace and harmony as well as sound business operations.

Email is a key entry point for cyberattacks, and cybercriminals now use deepfake technology to create realistic phishing emails. These emails bypass conventional security filters, an area we cannot afford to neglect.

How will you detect Deep fakes?

Some technical flaws may not be recognizable, but there are a few minute, hairsplitting details to look for.

In video fakes, one often sees no eye movement or an unnatural facial expression. The skin colour may be slightly different, body positioning may be inconsistent, lip-syncing may be mismatched, and the body and face structure may not resemble what we are accustomed to seeing.

Because deepfakes are a grave concern from a cybersecurity perspective, it is important to stay alert to evolving deepfake technologies and understand how they are used, in order to defend on all fronts at both the individual and organizational level.

Deepfakes are AI-driven, and the rise of phishing attacks that incorporate them poses a challenge, particularly because social media profiles are so often used. Readily available AI-enabled computers allow cybercriminals to deploy chatbots that nobody can detect as fake.

Mitigating the Digital Threat

According to a KPMG report, deepfakes may be growing in sophistication and appear to be a daunting threat. However, by integrating deepfakes into the company’s cybersecurity and risk management, CISOs, in association with CEOs and Chief Risk Officers (CROs), can help their companies stay one step ahead of malicious actors.

This calls for a broad understanding across the organization of the risks of deepfakes, and the need for an appropriate budget to combat this threat.

If Deepfakes can be utilized to infiltrate an organization, the same technology can also protect it. Collaborating with deepfake cybersecurity specialists helps spread knowledge and continually test and improve controls and defenses, to avoid fraud, data loss and reputational damage.

BISO Analytics:

We at Intruceptlabs have a mission: to protect your organization from any cyber threat while keeping confidentiality and integrity intact.

We offer BISO Analytics as a service to ensure business continuity while you remain secure. BISOs translate concepts and connect the dots between cybersecurity and business operations, ensuring business functions are in sync with cyber teams.

Sources: https://kpmg.com/xx/en/our-insights/risk-and-regulation/deepfake-threats.html

AI-Driven Phishing And Deep Fakes: The Future Of Digital Fraud